Traditional RAG has a problem. Not because it's broken — it works well, at least for simple queries. The problem is that enterprise questions are rarely simple.

How Traditional RAG Works — And Where It Breaks Down

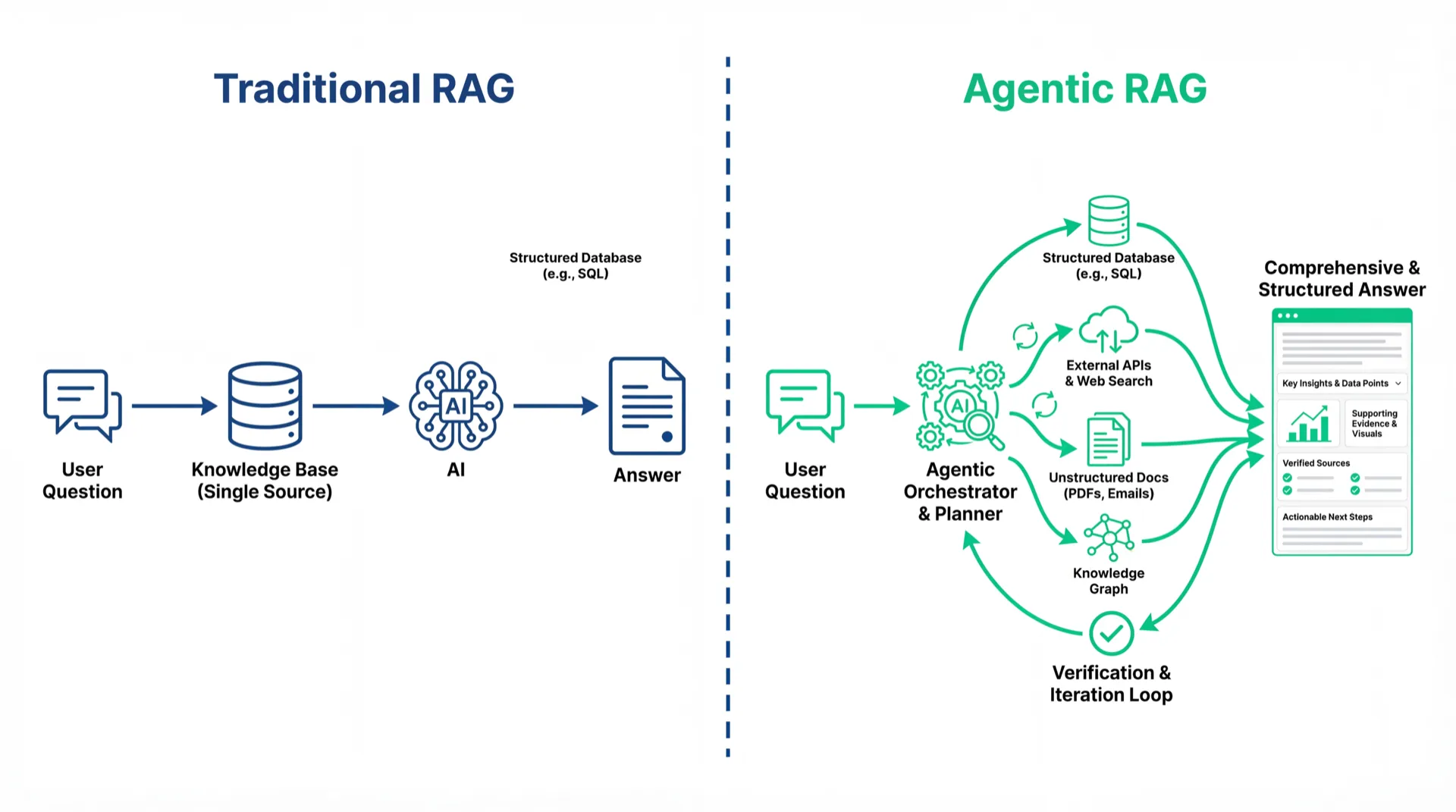

RAG (Retrieval-Augmented Generation) works like this: a user asks a question, the system searches a knowledge base for relevant document chunks, and an AI generates an answer from those chunks. For simple questions — "What is your return policy?" — this works fast, accurately, and cheaply.
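The flow described above can be sketched in a few lines of Python. This is a minimal illustration, not a production pipeline: the knowledge base, the bag-of-words `score` function (standing in for embedding similarity), and the `generate` placeholder (standing in for a language model call) are all assumptions made for the example.

```python
import math
import re
from collections import Counter

# Hypothetical toy knowledge base; a real system would store embedded chunks in a vector index.
KNOWLEDGE_BASE = [
    "Return policy: items can be returned within 30 days with a receipt.",
    "Shipping: standard delivery takes 3 to 5 business days.",
    "Warranty: hardware is covered for one year from purchase.",
]

def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def score(query: str, chunk: str) -> float:
    """Cosine similarity over word counts (a stand-in for embedding similarity)."""
    q, c = Counter(tokenize(query)), Counter(tokenize(chunk))
    dot = sum(q[t] * c[t] for t in q)
    norm = math.sqrt(sum(v * v for v in q.values())) * math.sqrt(sum(v * v for v in c.values()))
    return dot / norm if norm else 0.0

def retrieve(query: str, k: int = 1) -> list[str]:
    """Return the k chunks most similar to the query."""
    return sorted(KNOWLEDGE_BASE, key=lambda chunk: score(query, chunk), reverse=True)[:k]

def generate(query: str, chunks: list[str]) -> str:
    """Placeholder for the language model call: it only echoes the retrieved context."""
    return f"Question: {query}\nContext: {' '.join(chunks)}"

def traditional_rag(query: str) -> str:
    """One pass: retrieve once, generate once, no verification step."""
    return generate(query, retrieve(query))

print(traditional_rag("What is your return policy?"))
```

Note that nothing in this flow checks whether the retrieved chunk actually answers the question; whatever scores highest is passed straight to generation.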

But imagine a sales rep asking: "Have we worked with any manufacturer at TSMC's scale using on-premise deployment? How did the client evaluate us after the engagement?" Traditional RAG will search, retrieve some documents, and stitch together an answer. It cannot tell you whether the case it found is truly comparable to TSMC's scale, or whether "on-premise deployment" is confirmed fact or a planning option. Sounding reasonable and being accurate are two different things.

What Is Agentic RAG

Agentic RAG integrates AI Agent reasoning into the RAG retrieval pipeline, enabling the AI to actively plan retrieval strategies, integrate information from multiple sources, verify answer quality, and re-query when needed — rather than passively fetching documents.

NVIDIA offers a useful analogy: traditional RAG is like an outdated navigation app — it gets you to the destination, but doesn't know about today's road construction. Agentic RAG is a live navigation app that knows the traffic conditions and re-routes proactively.

The same sales question handled by each approach:

Traditional RAG: Runs a single search on terms such as "TSMC," "on-premise," and "manufacturing," retrieves a few document fragments, and generates an answer. It cannot verify whether the case scale matches or whether the deployment type is confirmed. The answer sounds plausible, but you wouldn't use it without checking it first.

Agentic RAG: The Agent decomposes the question into three sub-queries: client industry and scale, deployment type, and post-engagement records. It queries the case database, deployment logs, and client tier data separately, cross-references them, and generates an answer with a source citation for each key fact.
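A rough sketch of that decompose, query, and cross-reference flow follows. Everything here is hypothetical: the client names, record contents, source identifiers, and the hard-coded `SUB_QUERIES` list are invented for illustration. In a real agent, a language model would produce the decomposition and the sources would be live systems.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Fact:
    client: str
    field: str
    value: str
    source: str  # citation carried along with every fact

# Hypothetical records standing in for three separate enterprise sources.
SOURCES = {
    "client_tiers": [
        Fact("Client A", "scale", "large manufacturer", "client_tiers#1"),
        Fact("Client B", "scale", "mid-size manufacturer", "client_tiers#2"),
    ],
    "deployment_logs": [
        Fact("Client A", "deployment", "on-premise (confirmed)", "deployment_logs#3"),
        Fact("Client B", "deployment", "on-premise (planned)", "deployment_logs#8"),
    ],
    "case_database": [
        Fact("Client A", "feedback", "renewed contract", "case_database#12"),
        Fact("Client B", "feedback", "no follow-up recorded", "case_database#27"),
    ],
}

# The decomposed question: (source to query, field of interest, acceptance test).
SUB_QUERIES = [
    ("client_tiers", "scale", lambda v: v.startswith("large")),
    ("deployment_logs", "deployment", lambda v: "confirmed" in v),
    ("case_database", "feedback", lambda v: True),
]

def run_sub_query(source, field, accept):
    """Query one source and index the matching facts by client."""
    return {f.client: f for f in SOURCES[source] if f.field == field and accept(f.value)}

def answer_with_citations():
    """Cross-reference the sub-query results and keep a citation for each key fact."""
    results = [run_sub_query(*sub_query) for sub_query in SUB_QUERIES]
    # Only clients that satisfy every sub-query count as comparable cases.
    clients = set.intersection(*(set(r) for r in results))
    return {c: [(r[c].field, r[c].value, r[c].source) for r in results] for c in sorted(clients)}

for client, facts in answer_with_citations().items():
    print(client, facts)
```

In this toy data, the client whose on-premise deployment is only planned is filtered out, which is the distinction a single-pass search cannot make.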

The three capabilities traditional RAG lacks:

Memory: Traditional RAG treats every Q&A as independent. Agentic RAG maintains conversational context across multi-turn dialogues.

Intelligent routing: Agentic RAG determines which sources to query, queries them separately, then synthesizes the results. Traditional RAG typically queries a single knowledge base.

Tool calling: Agentic RAG can call external APIs and query live databases. Traditional RAG is limited to its static knowledge base.
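The three capabilities can be shown together in a small sketch. The tool functions, their return strings, and the keyword-based `ROUTES` table are assumptions made for illustration; in practice the routing decision would usually come from a language model, and the tools would call real APIs or databases.

```python
from typing import Callable

# Hypothetical tools; real ones would call external APIs or live databases.
def search_policies(question: str) -> str:
    return "policy_docs: returns accepted within 30 days"

def lookup_inventory(question: str) -> str:
    return "inventory_api: 12 units in stock"

TOOLS: dict[str, Callable[[str], str]] = {
    "policies": search_policies,
    "inventory": lookup_inventory,
}

# Keyword rules standing in for a model-driven routing decision.
ROUTES = {
    "policies": ("return", "refund", "policy"),
    "inventory": ("stock", "inventory", "available"),
}

class Agent:
    def __init__(self) -> None:
        # Memory: each turn's question and the sources it used.
        self.history: list[tuple[str, list[str]]] = []

    def route(self, question: str) -> list[str]:
        """Routing: pick every source whose keywords appear in the question."""
        text = question.lower()
        chosen = [name for name, keywords in ROUTES.items() if any(k in text for k in keywords)]
        if not chosen and self.history:
            # A follow-up with no routing cues inherits the previous turn's sources.
            chosen = self.history[-1][1]
        return chosen

    def ask(self, question: str) -> list[str]:
        """Tool calling: query each selected source separately and collect the evidence."""
        sources = self.route(question)
        evidence = [TOOLS[name](question) for name in sources]
        self.history.append((question, sources))
        return evidence

agent = Agent()
print(agent.ask("What is the return policy, and is the item in stock?"))
print(agent.ask("What about next week?"))
```

The second question contains no routing cues of its own, so the agent falls back on the sources from the previous turn. An agent with no history would have nothing to query for the same question.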

The Accuracy Gap

According to IBM Research, traditional RAG output quality degrades significantly when queries involve multi-step reasoning or cross-source integration. NVIDIA's testing suggests that Agentic RAG architectures can improve accuracy by approximately 50% in complex retrieval scenarios.

MaiAgent's Agentic RAG deployments in manufacturing and financial services maintain 95%+ answer accuracy even with knowledge bases exceeding 100,000 documents. At that scale, traditional RAG accuracy typically falls to 60–70%, meaning roughly one in three answers would require human correction in a customer service context.
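The error rates implied by these accuracy figures are simple arithmetic, checked here with a short sketch. The helper name `answers_per_error` is made up for this example; the accuracy values are the ones quoted above.

```python
def answers_per_error(accuracy: float) -> float:
    """Average number of answers per wrong answer at a given accuracy."""
    return 1.0 / (1.0 - accuracy)

for accuracy in (0.60, 0.70, 0.95):
    error_rate = 1.0 - accuracy
    print(f"accuracy {accuracy:.0%}: error rate {error_rate:.0%}, "
          f"about 1 wrong answer in {answers_per_error(accuracy):.1f}")
```

At 60–70% accuracy this works out to one wrong answer in every 2.5 to 3.3 responses, compared with one in 20 at 95%.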

Is Traditional RAG Still Useful?

Yes. Traditional RAG is cheaper, faster, and architecturally simpler. For single-source, fixed-answer, straightforward question types, it remains the right choice. But when the AI starts giving answers that "sound reasonable but aren't quite right," when employees stop trusting the system's responses, or when question complexity exceeds single-source retrieval — that's the system telling you it's time to upgrade. Agentic RAG doesn't replace RAG; it makes RAG genuinely useful in more complex scenarios.

Frequently Asked Questions

What is the biggest difference between Agentic RAG and traditional RAG?

Traditional RAG follows a linear "query → retrieve → generate" flow. Agentic RAG adds an AI Agent that can reason across multiple iterations — deciding which sources to query, verifying answer quality, and re-querying when needed. Simply put: traditional RAG is reactive, Agentic RAG is proactive.
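The iterative part can be sketched as a bounded loop: retrieve, verify, and re-query if verification fails. The documents and the `retrieve`, `verify`, and `rewrite` stand-ins below are hypothetical; in a real system each would typically be backed by a search index or a language model call.

```python
# Hypothetical documents: one describes a planned deployment, one a confirmed deployment.
DOCS = [
    "Client B is evaluating an on-premise deployment option.",
    "Client A completed an on-premise deployment, confirmed in the rollout log.",
]

def retrieve(query: str) -> list[str]:
    """Toy keyword search: return the first document containing every query term."""
    terms = query.lower().split()
    return [d for d in DOCS if all(t in d.lower() for t in terms)][:1]

def verify(question: str, chunks: list[str]) -> bool:
    """Stand-in quality check: the evidence must state that the deployment is confirmed."""
    return any("confirmed" in chunk for chunk in chunks)

def rewrite(query: str, chunks: list[str]) -> str:
    """Stand-in query refinement: narrow the search after a failed check."""
    return query + " confirmed"

def agentic_loop(question: str, max_rounds: int = 3) -> dict:
    """Retrieve, verify, and re-query until the evidence passes or the budget runs out."""
    query, chunks = question, []
    for round_no in range(1, max_rounds + 1):
        chunks = retrieve(query)
        if verify(question, chunks):
            return {"chunks": chunks, "rounds": round_no, "verified": True}
        query = rewrite(query, chunks)
    return {"chunks": chunks, "rounds": max_rounds, "verified": False}

print(agentic_loop("on-premise deployment"))
```

With `max_rounds=1` the loop collapses to the linear flow and returns the unverified first hit, which is the behavior of traditional RAG in this toy setup.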

Does every enterprise need Agentic RAG?

Not necessarily. If your knowledge base handles single-source, straightforward question types, traditional RAG may be sufficient. Agentic RAG's advantages appear in complex, multi-source, multi-step reasoning scenarios. The practical test: if your employees routinely have to verify the AI's answers themselves, it may be time to upgrade.

Is Agentic RAG more expensive than traditional RAG?

Yes. It requires more AI reasoning steps, which increases token usage and compute costs. But this needs to be weighed against the value of the accuracy improvements: a wrong answer that sends an employee into two hours of verification work costs far more than a few extra tokens.

Does adopting Agentic RAG require rebuilding the entire knowledge base?

No. Agentic RAG enhances the retrieval and reasoning layers on top of your existing knowledge base. Your documents, databases, and historical records continue as-is — what changes is how the AI queries and synthesizes them.

Sources

NVIDIA Technical Blog — RAG 101: Demystifying Retrieval-Augmented Generation Pipelines (Agentic RAG 50% accuracy improvement data)

IBM Research — Retrieval-Augmented Generation (traditional RAG quality degradation in complex queries)

MaiAgent x Advantech — One-Stop AI Solution (manufacturing and financial deployment case data)