The Invisible Security Breach in Your Office

Ask any IT director whether their company uses AI and you'll usually hear: "We're still evaluating." Then walk to any desk in that same office, glance at the open browser tabs, and you'll almost certainly see ChatGPT.

This is the reality of shadow AI: employees adopt tools that make their jobs easier without waiting for corporate approval. According to Salesforce research, 69% of employees worldwide admit to using AI tools their companies haven't authorized. The problem isn't malicious intent. The problem is that when sensitive data flows into third-party AI services, the enterprise has no visibility and no control.

The Samsung Incident: A Wake-Up Call No CISO Can Ignore

In 2023, Samsung engineers used ChatGPT to help write code and, in the process, pasted internal source code, meeting notes, and confidential technical documentation into the chat window. That data potentially became training material for OpenAI's models. Once submitted, it could not be recalled.

Samsung responded by banning ChatGPT company-wide. But blocking is never a real solution. Employees without approved tools simply migrate to other unmanaged platforms: Gemini, Claude on the web, personal Copilot accounts. The shadow AI universe is far larger than any IT blocklist can contain.

The Samsung case reveals a fundamental contradiction: AI productivity gains are real, employee demand is real, and your enterprise data perimeter is quietly dissolving.

Why Blocking Always Fails

Blocking feels intuitive from an IT governance perspective. But it has three fundamental flaws.

First, it can't stop personal devices. Employees can use their phones and home computers, then paste AI-generated content back into work systems. This behavior is nearly impossible to track.

Second, it creates a competitive disadvantage. The security risk is real: Gartner projects that more than 40% of enterprises will experience significant AI-related security incidents by 2030. But the opportunity cost is just as real: companies that successfully deploy enterprise AI are pulling ahead in employee productivity, and a blanket ban forfeits those gains.

Third, blocking doesn't address the underlying need. Employees turn to shadow AI because they don't have an approved alternative. The answer isn't prohibition — it's providing a better, sanctioned option.

Cyberhaven's research estimates shadow AI exposes the average enterprise to roughly $5.2 million annually in compliance risk.

What Enterprises Actually Need: Governed AI, Not Banned AI

The real challenge facing IT and security teams is this: how do you let employees use AI safely and legally, without surrendering data sovereignty?

Three conditions need to hold simultaneously.

Data stays in-house. AI inference must happen on the enterprise's own infrastructure, or in a private cloud environment with explicit data processing agreements. What employees ask, what documents they upload, what answers they receive — all of this should stay within the enterprise's control boundary.

Knowledge has walls. Enterprise AI should only answer questions based on the enterprise's own knowledge base and document library, not from public internet sources.

Usage is logged. Who asked what, what answers were given, which documents were cited — all of this should have a complete audit trail for compliance verification.
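To make the third condition concrete, here is a minimal sketch of what one audit record might contain and how a compliance reviewer could query it. The `AuditRecord` class, its field names, and the JSON-lines storage format are all assumptions chosen for illustration; a real deployment would follow its own schema and retention rules.

```python
import json
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone


@dataclass
class AuditRecord:
    """One logged Q&A exchange. All field names are hypothetical."""
    user_id: str
    question: str
    answer: str
    cited_documents: list[str]
    # UTC timestamp recorded when the entry is created.
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_log_line(record: AuditRecord) -> str:
    """Serialize a record as one JSON line for an append-only log."""
    return json.dumps(asdict(record), ensure_ascii=False, sort_keys=True)


def questions_by_user(log_lines: list[str], user_id: str) -> list[str]:
    """Answer the compliance question: what did this user ask?"""
    records = (json.loads(line) for line in log_lines)
    return [r["question"] for r in records if r["user_id"] == user_id]
```

Because each record captures who asked, what was answered, and which documents were cited, the same log can serve both routine compliance checks and after-the-fact dispute tracing.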

MaiAgent AI KM: The IT-Approved Internal AI

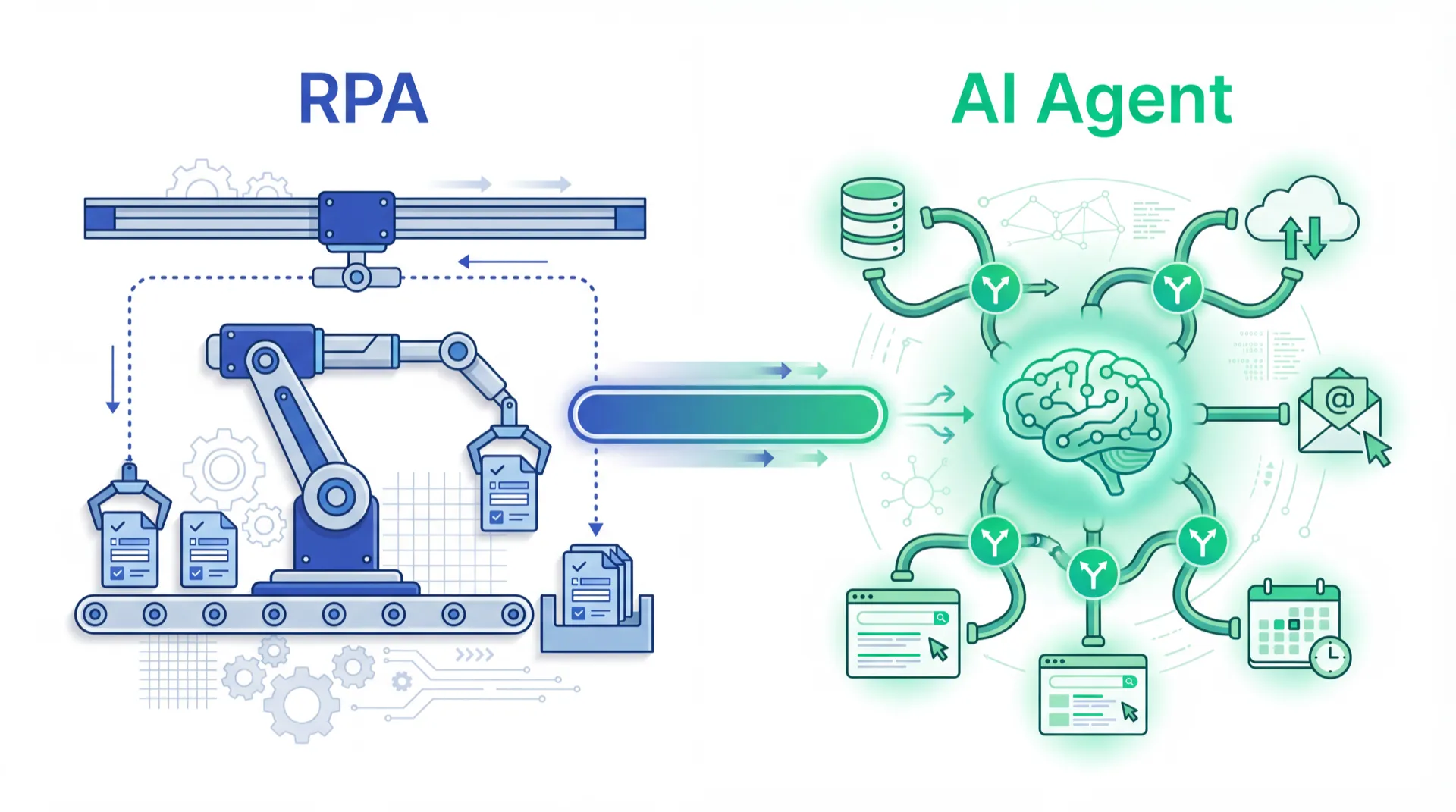

MaiAgent's AI KM (Knowledge Management) is purpose-built for this problem. It's not a general-purpose chatbot — it's an intelligent Q&A system built on top of the enterprise's own knowledge base, using an Agentic RAG (Retrieval-Augmented Generation) architecture to ensure every answer is grounded in specific internal documents.

On the deployment side, MaiAgent supports private cloud and on-premise deployment, so enterprise data never needs to leave your servers. On the knowledge management side, AI KM lets enterprises upload internal documents in any format, and every answer includes source citations. On the governance side, IT administrators can configure access, review Q&A logs, and adjust the AI's scope immediately.
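The general idea of answering only from permitted internal documents, with citations, can be sketched in a few lines. This is not MaiAgent's implementation: it swaps in a naive keyword-overlap retriever for a real RAG pipeline, and the `Document` class, the `allowed_groups` field, and the `min_overlap` threshold are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_groups: frozenset  # hypothetical access-control field


def tokenize(text: str) -> set:
    """Lowercase words with surrounding punctuation stripped."""
    return {word.strip(".,?!:;").lower() for word in text.split()} - {""}


def retrieve(question, docs, user_groups, min_overlap=2):
    """Return documents the user may read, ranked by shared-word count."""
    q_tokens = tokenize(question)
    scored = []
    for doc in docs:
        if not (doc.allowed_groups & user_groups):
            continue  # access control: skip documents this user cannot see
        overlap = len(q_tokens & tokenize(doc.text))
        if overlap >= min_overlap:
            scored.append((overlap, doc))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored]


def answer(question, docs, user_groups):
    """Answer only from retrieved internal documents, always with citations."""
    hits = retrieve(question, docs, user_groups)
    if not hits:
        # Nothing in the knowledge base supports an answer, so decline.
        return {"answer": None, "citations": []}
    return {"answer": hits[0].text, "citations": [d.doc_id for d in hits]}
```

The design choice worth noting is the refusal path: when no permitted document matches, the sketch returns no answer rather than falling back to outside knowledge, which is what keeps the knowledge boundary intact.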

From "Block AI" to "Govern AI"

More enterprise security professionals are recognizing that AI governance and data governance are two sides of the same problem. You can't solve shadow AI with technical blocks alone. You need a framework that makes AI usage visible, controllable, and auditable.

The core question isn't "should we let employees use AI?" The questions that matter are: When employees use AI, where does our data go? Are AI answers based on trusted internal knowledge? When errors or disputes arise, can we trace what happened?

MaiAgent's approach is straightforward: rather than letting employees secretly use ChatGPT, give them a better tool — one that only knows company knowledge, only operates in the company environment, and cites its sources every single time.

An Action Checklist for IT Leaders

Step one: inventory the reality. Use network traffic analysis or employee surveys to understand which AI services are already being used informally.

Step two: assess the risk. For the most commonly used shadow AI tools, review their data processing policies.

Step three: provide an alternative. Rather than purely blocking, proactively offer an IT-approved AI tool.

Step four: build a policy. Establish a clear enterprise AI use policy that spells out which tools are approved and what data must never be entered into AI systems.
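Returning to step one, the traffic-analysis half of the inventory can start very simply. The sketch below counts distinct users per AI service from proxy log lines. It assumes a hypothetical three-field log format (`<timestamp> <user> <host>`) and a short illustrative domain watchlist; real proxy logs and a maintained domain list will look different.

```python
from typing import Optional

# Illustrative watchlist only; a real inventory needs a maintained list.
AI_DOMAINS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
    "copilot.microsoft.com": "Copilot",
}


def match_service(host: str) -> Optional[str]:
    """Map a hostname (or any subdomain of it) to a known AI service."""
    host = host.lower().rstrip(".")
    for domain, service in AI_DOMAINS.items():
        if host == domain or host.endswith("." + domain):
            return service
    return None


def inventory(log_lines):
    """Count distinct users per AI service.

    Assumes the hypothetical format '<timestamp> <user> <host>';
    lines that do not have exactly three fields are skipped.
    """
    users_by_service = {}
    for line in log_lines:
        parts = line.split()
        if len(parts) != 3:
            continue
        _, user, host = parts
        service = match_service(host)
        if service:
            users_by_service.setdefault(service, set()).add(user)
    return {service: len(users) for service, users in users_by_service.items()}
```

Counting distinct users rather than raw requests gives a better picture of adoption, and the result is a natural input to step two: the services with the most users are the ones whose data processing policies deserve review first.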

Shadow AI isn't going away. But it can be governed — as long as you choose governance over prohibition.

Want to give employees a governed AI assistant — without the security risk? Talk to a MaiAgent consultant →

Frequently Asked Questions

How is shadow AI different from a conventional data breach?

Traditional data breaches typically involve malicious attacks or employee mistakes that result in data being stolen. Shadow AI is different — employees are actively inputting data into third-party services, usually with good intentions, but with the same practical effect of moving sensitive information outside the controlled environment.

Can enterprises just use ChatGPT Enterprise or Microsoft Copilot instead?

Both products offer better data protections than their consumer counterparts, but they remain public cloud services. For enterprises with data sovereignty requirements or those working with locally sensitive data, these services may not fully satisfy regulatory requirements.

How does MaiAgent AI KM ensure data isn't used for AI training?

MaiAgent uses on-premise or private cloud deployment architecture, with enterprise data stored entirely on the customer's own infrastructure. MaiAgent's model inference service is separated from customer data and never uses customer Q&A records or knowledge base content for any form of model training.

How long does it take to deploy an enterprise AI KM system?

Depending on enterprise scale and knowledge base complexity, standard deployment typically completes in 2 to 6 weeks. MaiAgent provides end-to-end implementation services from document ingestion and knowledge base organization to employee training.