When AI Confidently Says the Wrong Thing

Have you ever asked an enterprise AI about an internal process and received an answer that sounded completely plausible — but had nothing to do with how your company actually operates? Worse yet, the answer was delivered with such confidence that the error was hard to catch.

This is AI hallucination: a language model generating information that sounds reasonable but is factually incorrect. According to multiple enterprise AI adoption studies, 85% of enterprise AI failure cases ultimately trace back to knowledge base quality issues, not model capability deficiencies. For enterprises, a hallucination is more than a wrong answer: it can lead to business losses, customer complaints, and even legal liability.

The Three Root Causes of Hallucination

Solving AI hallucination requires understanding why it happens. In enterprise deployment scenarios, hallucination typically stems from three core problems.

Low-Quality Knowledge Bases

Many enterprises build their AI knowledge bases by bulk-uploading everything at once: badly formatted Word documents, scanned PDFs, unstructured spreadsheets, even informal chat logs. Low-quality input produces low-quality output. An even more common problem is knowledge fragmentation: related information is scattered across multiple documents with no clear cross-references, so a retrieval may surface only part of the picture.

Missing Source Citation Mechanisms

Traditional RAG systems retrieve relevant document chunks and pass them as context to the language model, but many implementations don't tell the user where the answer came from. AI answers without source citations look identical to answers generated from thin air.

Outdated Knowledge Base Content

Enterprise policies, regulations, and processes change. When AI retrieves information from an outdated document, it hasn't technically lied — but for the user, the result is indistinguishable from hallucination.

Agentic RAG: The Technical Framework for Solving Hallucination

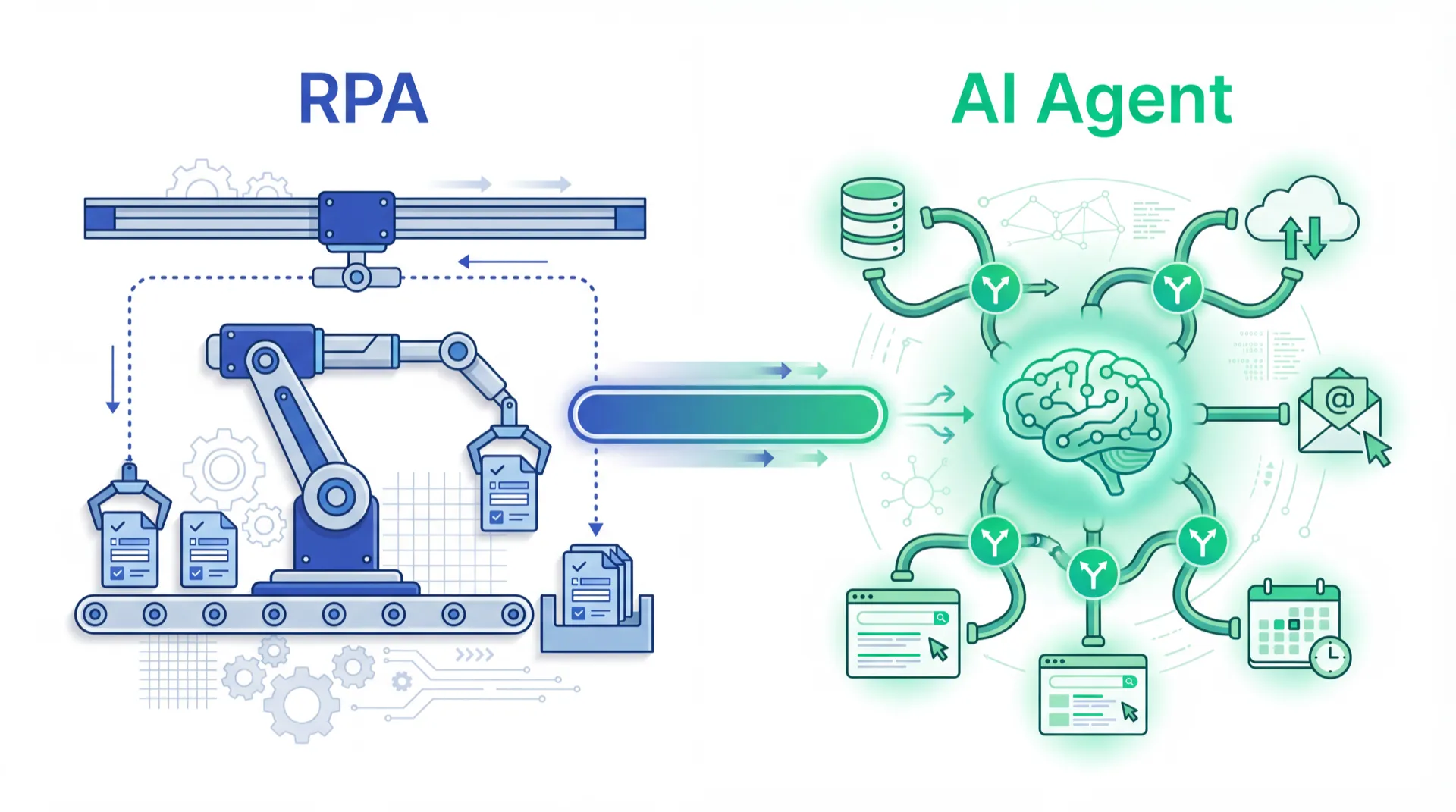

Traditional RAG follows a linear workflow: receive question → vector retrieval → generate answer. Agentic RAG adds steps in which the system evaluates its own intermediate results. Three of these additions matter most for reducing hallucination.

Retrieval Verification

Before generating the final answer, the system actively evaluates whether the retrieved document chunks are genuinely relevant to the question, and sets aside those that are not. Academic research reports that Agentic RAG can reduce enterprise AI hallucination rates by 42% to 68%.
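The verification step can be sketched as a filter that runs between retrieval and generation. This is a minimal illustration, not MaiAgent's implementation: the `Chunk` type, the `verify_chunks` function, the term-overlap check, and the 0.5 threshold are all assumptions chosen to keep the example self-contained. A production system would more likely use a reranking model or an LLM-based grader for the relevance check.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    doc_name: str
    text: str


def term_overlap(question: str, text: str) -> float:
    """Fraction of the question's terms that also appear in the chunk."""
    q_terms = {w.strip(".,?!").lower() for w in question.split()}
    q_terms.discard("")
    c_terms = {w.strip(".,?!").lower() for w in text.split()}
    if not q_terms:
        return 0.0
    return len(q_terms & c_terms) / len(q_terms)


def verify_chunks(question: str, chunks: list[Chunk], min_overlap: float = 0.5) -> list[Chunk]:
    """Keep only chunks that pass the relevance check (threshold is hypothetical)."""
    return [c for c in chunks if term_overlap(question, c.text) >= min_overlap]
```

Only the chunks that survive `verify_chunks` would be passed to the language model as context. If none survive, the system has a clear signal that it should not answer from retrieval alone.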

Confidence Scoring

The system calculates a confidence score for each generated answer. When scores fall below set thresholds, the system can explicitly tell users it lacks sufficient data, or trigger a human review workflow.
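One way to picture the thresholding is a small decision function. The sketch below assumes confidence is simply the mean relevance of the supporting chunks; real systems may combine several signals. The `Action` names and both threshold values are hypothetical and would need tuning against real traffic.

```python
from enum import Enum
from statistics import mean


class Action(Enum):
    ANSWER = "answer"
    HUMAN_REVIEW = "route to human review"
    DECLINE = "tell the user there is not enough data"


def confidence(relevance_scores: list[float]) -> float:
    """Toy confidence: mean relevance of the supporting chunks, 0.0 if none."""
    return mean(relevance_scores) if relevance_scores else 0.0


def decide(score: float, answer_at: float = 0.75, review_at: float = 0.4) -> Action:
    """Map a confidence score to an action; both thresholds are hypothetical."""
    if score >= answer_at:
        return Action.ANSWER
    if score >= review_at:
        return Action.HUMAN_REVIEW
    return Action.DECLINE
```

Keeping the thresholds as parameters lets each deployment decide how cautious to be, for example a stricter `answer_at` for legal or compliance questions.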

Source Citation

Requiring AI systems to explicitly cite sources in every response lets users verify answer accuracy, building rational trust. It also functions as a forcing mechanism: if the system can't find sufficient supporting documents, it must acknowledge this rather than generating an unverifiable response.
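The forcing mechanism can be illustrated with a response builder that refuses to return an uncited answer. This is a sketch under assumptions: the `Source` type, the `respond` function, and the wording of the fallback message are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Source:
    doc_name: str
    paragraph: int


def respond(draft_answer: str, sources: list[Source]) -> str:
    """Return the draft only when at least one source backs it; otherwise decline."""
    if not sources:
        return "I could not find a supporting document for this question."
    citations = "; ".join(f"{s.doc_name}, paragraph {s.paragraph}" for s in sources)
    return f"{draft_answer}\n\nSources: {citations}"
```

Because the check sits at the last step before the user sees anything, a draft with no supporting documents never reaches them as if it were grounded.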

Knowledge Base Quality: The Underestimated Key Investment

70% of organizations already use some form of AI knowledge management system, but the proportion that truly maintains a high-quality knowledge base remains low. Four practices make the difference.

Structure-first. Convert unstructured data into structured formats with clear labeling of document type, scope, effective date, and version number.

Single source of truth. The same piece of knowledge exists in only one location, eliminating version conflicts.

Regular update mechanisms. Establish document expiration reminders and mandatory update workflows, with shorter review cycles for regulatory documents.

Coverage awareness. Regularly analyze the questions employees asked that the AI could not answer. These are the knowledge base's blind spots.
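The structure-first and regular-update practices can be sketched together as a metadata record plus a review check. The field names follow the labels listed above (document type, scope, effective date, version number). The `last_reviewed` field, the `review_due` function, and the cycle lengths are assumptions for illustration only.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class DocumentRecord:
    title: str
    doc_type: str          # e.g. "policy", "regulation", "how-to"
    scope: str             # who or what the document applies to
    effective_date: date
    version: str
    last_reviewed: date    # hypothetical field used for expiration reminders


# Hypothetical review cycles in days; regulatory documents get a shorter one.
REVIEW_CYCLE_DAYS = {"regulation": 90, "policy": 180}
DEFAULT_CYCLE_DAYS = 365


def review_due(doc: DocumentRecord, today: date) -> bool:
    """True when the document has gone longer than its review cycle without review."""
    cycle = REVIEW_CYCLE_DAYS.get(doc.doc_type, DEFAULT_CYCLE_DAYS)
    return today - doc.last_reviewed > timedelta(days=cycle)
```

Running `review_due` over the whole knowledge base on a schedule would produce the reminder list, and the same metadata lets retrieval prefer the current version of a document over an older one.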

MaiAgent AI KM: From Architecture to Practice

MaiAgent's AI KM packages these technical principles into a product designed for enterprise IT teams, using an Agentic RAG framework with built-in retrieval verification and confidence scoring. Every answer includes source citations precise to document name and paragraph location.

AI hallucination is not a problem to live with — it's the biggest obstacle between enterprise AI pilots and real business deployment. The solution isn't a better model. It's a better knowledge foundation.

Frequently Asked Questions

What's the main difference between Agentic RAG and traditional RAG?

Traditional RAG runs a single linear pass: retrieve once, then generate. Agentic RAG adds iteration and self-evaluation: the system can assess the quality of its retrieval results, retrieve again when they are unsatisfactory, and verify its own generated answer.
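The iterative part can be sketched as a loop around the retriever. This is a simplified illustration: the `agentic_retrieve` function and its three callables (`retrieve`, `is_sufficient`, `rewrite`) are hypothetical stand-ins for a vector search, a relevance grader, and a query rewriter. It covers the assess-and-retry steps only; self-verification of the generated answer is not shown.

```python
from typing import Callable

Retriever = Callable[[str], list[str]]
Grader = Callable[[str, list[str]], bool]
Rewriter = Callable[[str], str]


def agentic_retrieve(
    question: str,
    retrieve: Retriever,
    is_sufficient: Grader,
    rewrite: Rewriter,
    max_rounds: int = 3,
) -> list[str]:
    """Retrieve, grade the result, and retry with a rewritten query if needed.

    Returns the first chunk set the grader accepts, or an empty list when
    every round fails, so the caller can decline instead of guessing.
    """
    query = question
    for _ in range(max_rounds):
        chunks = retrieve(query)
        if is_sufficient(question, chunks):
            return chunks
        query = rewrite(query)
    return []
```

A traditional pipeline corresponds to `max_rounds=1` with a grader that accepts everything. The cap on rounds keeps latency and cost bounded.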

Our knowledge base currently only has Word and PDF files. Can we use them directly?

Yes, but quality preprocessing before upload is recommended. Scanned PDFs need OCR processing; documents with heavy tables need special handling. MaiAgent AI KM provides pre-upload document quality assessment.

If the AI's confidence score is low, what should we do?

Three common strategies: set thresholds so low-confidence answers trigger human review; have AI explicitly mark uncertainty in its answers; automatically add low-confidence questions to a knowledge gap list as priority input for updates.
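The third strategy, the knowledge gap list, can be sketched as a simple aggregation over a question log. The log format (question text paired with a confidence score), the `knowledge_gaps` function, and the 0.4 threshold are assumptions for illustration.

```python
from collections import Counter


def knowledge_gaps(log: list[tuple[str, float]], threshold: float = 0.4) -> list[tuple[str, int]]:
    """Rank low-confidence questions by how often they were asked."""
    counts = Counter(q.strip().lower() for q, score in log if score < threshold)
    return counts.most_common()
```

Questions at the top of the list are the ones employees ask most often without getting a confident answer, which makes them reasonable first candidates for new or updated documents. Exact-match counting is a simplification; grouping paraphrased questions would need similarity matching.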

How large does the knowledge base need to be for effective enterprise AI?

Quality matters far more than quantity. A knowledge base with 200 high-quality, well-structured, regularly updated documents typically outperforms one with 2,000 documents of inconsistent quality.